NEWS

EnCORE in the News: 2023

December 20, 2023 | EnCORE

The Rising Stars in Data Science workshop was hosted November 13-14 by the University of Chicago in collaboration with the University of California, San Diego – EnCORE and HDSI. The conference brought together an exceptional selection of Ph.D. students and postdocs to share their latest research findings and discuss emerging trends and challenges.

The EnCORE team, in collaboration with UChicago, put tremendous effort into the planning, production, and application review process of the Rising Stars workshop. The EnCORE team also focused the aim of the workshop on increasing representation and diversity in data science by giving participants a platform to receive invaluable career mentoring from EnCORE PIs and other esteemed faculty.

Read the event recap here.

Rising Stars application deadlines and conference dates for 2024 will be shared in early 2024.

December 2, 2023 | EnCORE

Last Saturday, Saura Naderi of HDSI Lab 3.0 and EnCORE brought together a group of elementary school students for an immersive workshop on robotics and creativity. The workshop’s main focus was the fusion of traditional arts and crafts with robotics, challenging young minds to explore the intersection of art and technology. Throughout the day, students worked with their parents or guardians, learning the fundamentals of coding and circuits while creating their own robotic arts and crafts projects.

Learn more about the outreach event here.

November 10, 2023 | EnCORE

The Institute for Emerging CORE Methods in Data Science (EnCORE) welcomes proposals for extended research visits in 2024-2025. Each proposal needs to identify 3-5 researchers who will spend 2-4 weeks at the institute working on their proposed research theme.

Foundational questions in all areas of Computer Science, Data Science, and AI are within scope. A proposal may, among other things, aim to solve long-standing open questions in an established area, identify new theoretical directions in an emerging application area, or bridge theory and practice. In addition, each team will organize a workshop (3 to 5 days) related to the research theme during their stay at UCSD, bringing in additional participants (up to 20 including the organizers) to facilitate further dialogue. Proposals from industry are welcome and encouraged.

Learn more about the program and the application process here.

October 12, 2023 | NSF

October 2, 2023 | NSF

Multiple postdoctoral fellowship opportunities are available with The Institute for Emerging CORE Methods in Data Science (EnCORE), a TRIPODS Phase II institute funded by the National Science Foundation. The EnCORE Institute is a collaboration among researchers at UC San Diego, UCLA, UT Austin and Penn. Postdoctoral fellows will have the option to be based at one or more of these universities and to collaborate with EnCORE PIs across theoretical computer science and engineering, mathematics, statistics, and applications to domain sciences.

Postdoctoral team members will also have mentorship opportunities and are expected to participate in and organize workshops, seminars and other activities of the EnCORE Institute.

Candidates are encouraged to work with multiple EnCORE faculty members at one or more participating universities. Applicants should have a strong background and a doctorate (by the start date) in a related field of Mathematics, Statistics, Computer Science, or Electrical Engineering. We encourage applications from underrepresented minorities in STEM.

All application materials, including letters of recommendation, should be submitted by January 1, 2024 for full consideration; however, the application website will remain open until the positions are filled.

Learn more about the fellowship and application requirements here.

September 13, 2023 | NSF

Join the 2-day EnCORE tutorial “Characterizing and Classifying Cell Types of the Brain: An Introduction for Computational Scientists” with Michael Hawrylycz, Ph.D., Investigator, Allen Institute.

This tutorial will take place March 21-22, 2024 at UC San Diego, in Room 1242 of the Computer Science and Engineering Building. A Zoom option will also be available.

Learn more about the tutorial by visiting this event page.

April 4, 2023 | NSF

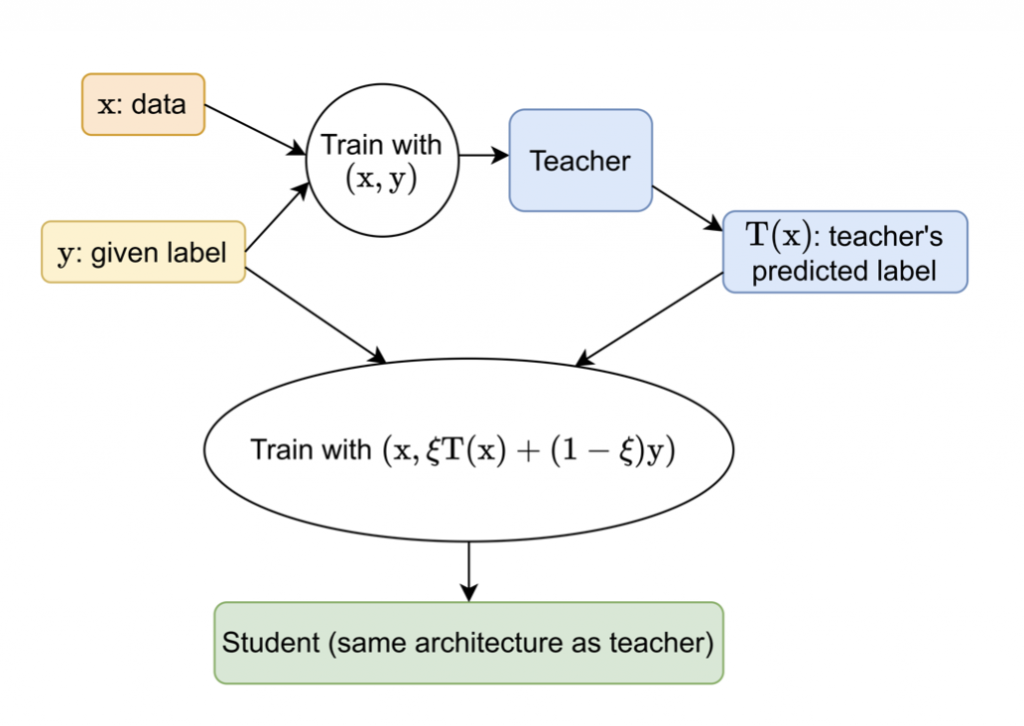

Self-distillation (SD) is a technique in which a “teacher” model is first trained in the standard way, and the teacher’s predictions are then used together with the original labels to train a “student” model with the same architecture as the teacher. The student often outperforms the teacher, even though it is trained on the same examples, with the same model architecture and the same training process. In this study, Rudrajit Das (EnCORE graduate RA and Ph.D. student) and Sujay Sanghavi (EnCORE Co-PI) explore why and when a student outperforms the teacher in linear and logistic regression when the given labels are corrupted with noise. They also formalize the limitations of SD and demonstrate the efficacy of their approach on various academic datasets with different label corruption models.
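The two-stage procedure described above can be illustrated with a minimal sketch. This is not the authors' code or experimental setup: it assumes a one-feature ridge regression with no intercept, and the names `fit_ridge_1d`, `self_distill`, the mixing weight `xi`, and the regularization strength `lam` are all hypothetical choices made for illustration.

```python
import random


def fit_ridge_1d(xs, ys, lam):
    """Closed-form ridge fit of y ~ w * x (one feature, no intercept)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


def self_distill(xs, ys, lam, xi):
    """Train a teacher, then a student of the same form on mixed targets.

    xi is an assumed mixing weight: 0 uses only the original labels,
    1 uses only the teacher's predictions.
    """
    # Stage 1: the teacher is trained on the given (possibly noisy) labels.
    teacher_w = fit_ridge_1d(xs, ys, lam)
    # Stage 2: the student's targets blend teacher predictions with the labels.
    targets = [xi * teacher_w * x + (1 - xi) * y for x, y in zip(xs, ys)]
    student_w = fit_ridge_1d(xs, targets, lam)
    return teacher_w, student_w


# Synthetic data with noisy labels (illustrative values only).
random.seed(0)
xs = [random.gauss(0, 1) for _ in range(200)]
ys = [2.0 * x + random.gauss(0, 1) for x in xs]

teacher_w, student_w = self_distill(xs, ys, lam=1.0, xi=0.5)
print("teacher weight:", teacher_w)
print("student weight:", student_w)
```

In this toy setting, setting `xi` to 0 makes the student identical to the teacher, while larger values of `xi` pull the student toward a more heavily regularized fit. Whether that helps under label noise is the kind of question the study addresses; the sketch only shows the mechanics.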

February 24, 2023 | NSF

Aaron Roth, Henry Salvatori Professor of Computer & Cognitive Science in Computer and Information Science (CIS), and Michael Kearns, National Center Professor of Management & Technology in CIS, recently led a PNAS study demonstrating how private data of U.S. citizens can be reconstructed from publicly released Census statistics. The study’s authors present an attack and show that its reconstructions succeed well beyond chance. By ranking the reconstructed data according to the likelihood of authenticity and by comparing against baselines corresponding to distributional knowledge of various strengths, the authors showcase how easily private data can be exposed.

February 14, 2023 | NSF

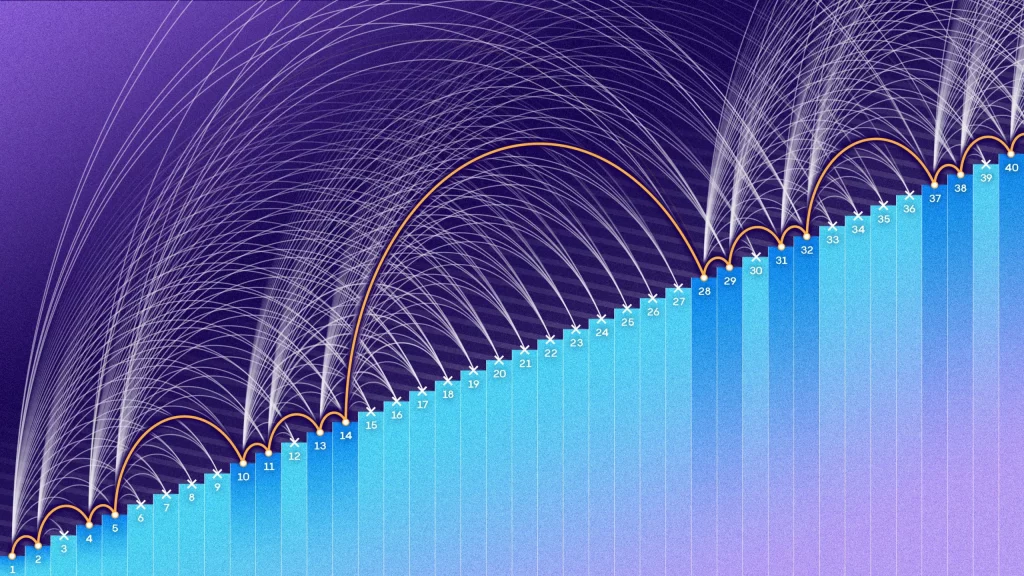

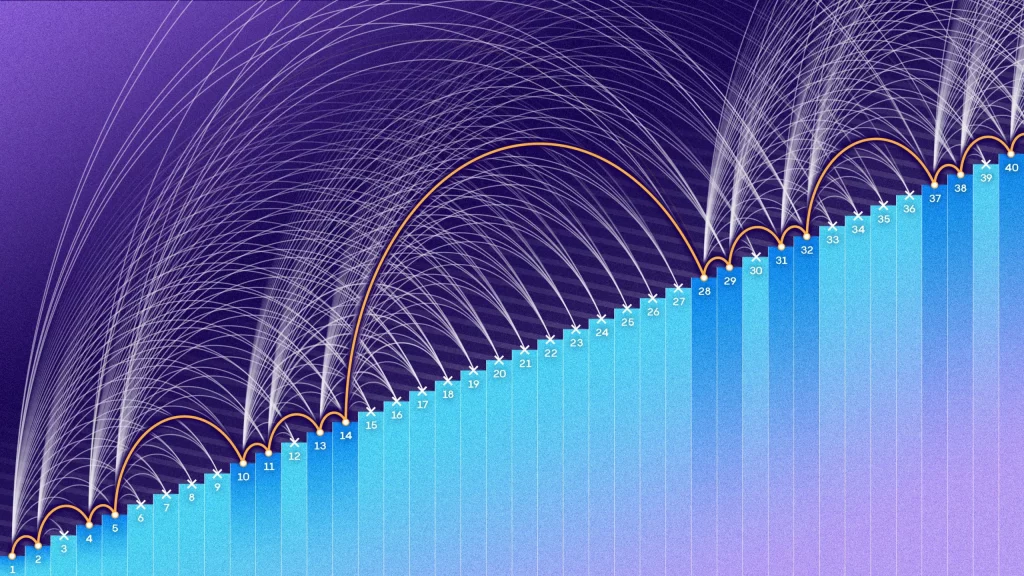

Raghu Meka (EnCORE Faculty) and Zander Kelley have achieved a breakthrough in mathematics. Their latest research paper, “Strong Bounds for 3-Progressions,” proves Behrend-type bounds for 3-term arithmetic progressions (3APs). The authors introduce innovative analytical techniques to tackle the relevant questions in the finite-field setting, which they then successfully adapt to the more intricate integer setting.
